Running a node on a blockchain testnet is one of the most practical ways to participate in an emerging Web3 ecosystem. It is how validators earn early reputation and potential mainnet allocations, how developers test smart contracts against realistic network conditions, how infrastructure teams qualify operational tooling before staking real capital, and how community contributors gather points and rewards distributed by protocol teams during incentivized testnet phases. Yet the very first technical decision in this process — what kind of server to rent — is also the one most often made carelessly. Operators frequently underprovision and watch their node fall out of sync, overprovision and waste hundreds of dollars per month, or choose a provider whose network behavior makes the node unreliable regardless of its specifications. The cost of a bad choice is not merely financial; missed blocks, slashing events on proof-of-stake testnets, and accumulated downtime erase the very reputation the node was meant to build.

This article is a practical, in-depth guide to selecting a virtual private server for a testnet validator or full node. It covers how to read the actual hardware requirements of a chain rather than the marketing version, how to interpret VPS specifications honestly, what storage and networking characteristics matter most, and how to size a machine that will remain viable as testnet state grows over weeks and months. For readers who want to evaluate provider options directly, a useful starting point is https://world.hstq.net/servers.html, which lists VPS and dedicated configurations suitable for blockchain workloads. The remainder of this guide explains exactly what to look for when comparing such offerings.

Understanding What a Testnet Node Actually Does

Before specifying hardware, an operator should understand the workload precisely. A blockchain node is not a typical web application. It is a long-running process that maintains an authoritative copy of a distributed ledger by performing several concurrent activities: receiving blocks and transactions from peers over a peer-to-peer network, cryptographically verifying every signature and state transition, executing smart contract code in a virtual machine, writing the resulting state changes to a local database, periodically pruning or compacting that database, and — for validator nodes — signing attestations or proposals that must reach the network within strict time windows.

Each of these activities stresses a different subsystem. Signature verification is CPU-bound and benefits enormously from modern instruction sets. Smart contract execution stresses CPU and memory bandwidth simultaneously. State updates hammer the disk with small random writes. Peer-to-peer gossip and block propagation consume sustained network bandwidth and require low jitter. The mistake most newcomers make is optimizing for one of these dimensions while ignoring the others — typically buying a CPU-rich VPS with slow disks, then watching the node fall behind despite a near-idle processor.

The Core Requirements: CPU, RAM, Storage, Network

Every chain publishes minimum and recommended hardware specifications, but these documents are notoriously optimistic. They describe the configuration on which the node will technically run, not the configuration on which it will run reliably for months under realistic conditions. As a rule of thumb, an experienced operator multiplies the official “recommended” specifications by 1.5 to 2 for testnet nodes that need to remain in sync continuously, and treats the “minimum” specifications as a guarantee of failure.

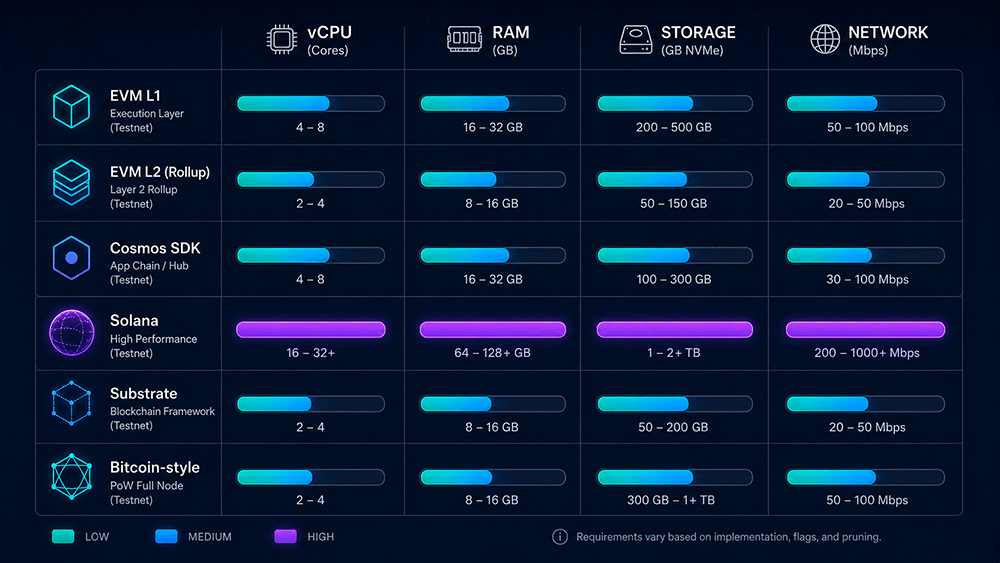

The table below summarizes typical real-world requirements for several popular categories of testnet nodes. These numbers reflect what tends to actually work for an unattended node running for several months as testnet state accumulates, not the day-one minimums.

| Node Type | vCPU | RAM | Storage | Network |

|---|---|---|---|---|

| EVM L1 testnet (e.g., Ethereum Sepolia/Holesky validator) | 4–8 | 16–32 GB | 1–2 TB NVMe | 25 Mbps sustained, unmetered preferred |

| EVM L2 rollup testnet (Optimism, Arbitrum, Base) | 4–8 | 16–32 GB | 500 GB – 1 TB NVMe | 50 Mbps sustained |

| Cosmos SDK chain testnet (Celestia, dYdX, Sei, Injective) | 4–8 | 16–32 GB | 500 GB – 2 TB NVMe | 50–100 Mbps sustained |

| Solana testnet/devnet validator | 16–24 | 128–256 GB | 2 × 2 TB NVMe | 1 Gbps with high PPS capacity |

| Substrate/Polkadot testnet collator | 4–8 | 16–32 GB | 500 GB – 1 TB NVMe | 50 Mbps sustained |

| Bitcoin-style PoW testnet full node | 2–4 | 8–16 GB | 500 GB SSD | 10 Mbps sustained |

| Aptos/Sui Move-based testnet | 8–16 | 32–64 GB | 1–2 TB NVMe | 100 Mbps sustained |

Two patterns are immediately visible. First, almost every modern testnet node demands NVMe storage rather than spinning disk or even SATA SSD — random write IOPS and fsync latency dominate the performance profile of state-tree updates. Second, Solana stands apart as an extreme outlier whose hardware demands resemble a small high-frequency-trading server rather than a typical blockchain node, reflecting its 400 ms block target and parallel transaction execution model.

Why Storage Is the Dimension That Most Often Fails

If a single VPS specification deserves disproportionate attention from a testnet operator, it is storage — specifically, the kind of storage and the IOPS it can sustain. Most blockchain clients use embedded key-value databases such as LevelDB, RocksDB, or Pebble. These engines write small records constantly, perform frequent fsync operations to guarantee durability, and trigger periodic compactions that briefly multiply write amplification. A VPS that advertises “SSD storage” without specifying whether the underlying medium is SATA SSD shared across many tenants, network-attached block storage with throttled IOPS, or local NVMe will deliver wildly different results for the same nominal capacity.

Operators should look for the following storage characteristics, in descending order of importance:

- Local NVMe rather than network-attached storage. Network block storage adds latency that compounds badly with fsync-heavy workloads.

- Dedicated IOPS allocation rather than shared pools. Some providers explicitly guarantee a minimum IOPS figure; others rely on burst credits that exhaust within hours under steady blockchain load.

- Sufficient capacity for two to three times the current chain size, since testnet state often grows faster than mainnet and pruning is sometimes unavailable on incentivized testnets that require archive mode.

- Filesystem flexibility — the ability to run ext4 or XFS, set appropriate mount options, and tune scheduler parameters.

CPU: Cores, Generations, and the AVX Question

Raw core count matters less than is often assumed. Most blockchain clients are not embarrassingly parallel; they have a hot path of sequential block processing that benefits more from high single-thread performance than from many slow cores. A modern CPU generation with high clock speeds often outperforms an older generation with twice the core count for typical EVM workloads.

A few specific CPU features deserve attention. Modern cryptographic operations — particularly those used in zero-knowledge rollups and BLS signature aggregation in Ethereum consensus clients — benefit from AVX2 and increasingly AVX-512 instruction sets. Some chains, notably those built around BLS signatures, run dramatically faster on processors that support these extensions. Before committing to a VPS, an operator should verify the CPU model exposed inside the guest (via cat /proc/cpuinfo on a trial instance) and confirm that the relevant flags are present and not masked by the hypervisor.

RAM: Sizing for State, Not for Idle

Memory requirements grow over time on most chains because state databases benefit from large in-memory caches and because mempool and peer connection overhead expands with network activity. A node that comfortably runs in 8 GB on day one of a testnet may require 16 or 24 GB three months later as state size and peer count grow. Provisioning RAM with at least 50% headroom over current observed usage is the practical minimum.

ECC RAM, which detects and corrects single-bit memory errors, is rarely available on consumer-grade VPS offerings but is standard on dedicated servers. For long-running nodes, this matters: an undetected memory bit-flip during state commit can cause silent database corruption that only manifests weeks later as a consensus failure.

Network: Bandwidth, Stability, and Geographic Position

Validator nodes are penalized for missing attestations or block proposals, which in turn depend on receiving and broadcasting messages within strict time windows. Network quality therefore matters as much as raw bandwidth. The relevant characteristics include:

- Sustained bandwidth rather than burst capacity. Testnets generate continuous gossip traffic.

- Low jitter and stable round-trip times to major peer concentrations.

- Unmetered or generous monthly transfer allowances, since some chains move several terabytes of data per month per node.

- IPv6 support, increasingly required by modern peer-to-peer stacks.

- Geographic diversity from other validators, which improves both decentralization scoring on some testnets and personal resilience to regional outages.

Provider Selection Criteria

Beyond raw specifications, the provider itself matters. The following table summarizes the criteria that distinguish providers genuinely suitable for blockchain workloads from those that merely advertise compatible specifications.

| Criterion | Why It Matters for Nodes |

|---|---|

| Local NVMe disclosure | Confirms storage is not network-attached or shared pool |

| Transparent CPU model | Allows verification of instruction-set support before purchase |

| Unmetered or high-cap bandwidth | Prevents overage charges or throttling mid-month |

| Multiple datacenter regions | Enables relocation if a region becomes congested or restricted |

| Hourly billing or short minimum terms | Allows experimentation before long-term commitment |

| Snapshot and image support | Enables fast recovery from corruption or rapid migration |

| Cryptocurrency or anonymous payment | Useful for operators in regions with payment friction |

| Reasonable abuse policy | Some providers terminate accounts for high outbound packet rates |

Common Mistakes to Avoid

The following mistakes recur with such regularity in operator communities that they deserve explicit mention:

- Choosing a VPS based on advertised vCPU count alone, without checking actual single-thread performance or steal time.

- Using network-attached block storage for state databases, then wondering why fsync latency causes the node to fall behind.

- Ignoring the snapshot/sync-from-genesis distinction; some testnets take days to sync from genesis on slow disks but minutes from a snapshot.

- Underestimating state growth and running out of disk halfway through an incentivized testnet phase.

- Co-locating multiple chains on one VPS to save money, then losing all of them when one chain’s state explosion fills the disk.

- Skipping monitoring. A node that silently falls out of sync for three days because no alerting was configured is far more expensive than the cost of basic Prometheus and Grafana setup.

- Choosing the cheapest provider in a popular region, where oversubscription is highest and noisy-neighbor effects dominate.

A Practical Sizing Workflow

The following workflow produces a defensible VPS specification for almost any testnet node. First, locate the official hardware recommendations published by the protocol team and treat them as a floor, not a ceiling. Second, search community discussions — Discord, forum threads, validator guild documents — for operator reports of actual resource consumption after thirty to ninety days of operation; these are usually substantially higher than the official numbers. Third, multiply the higher of the two figures by 1.5 for storage and RAM, and by 1.25 for CPU, to account for state growth and peer activity over the testnet’s duration. Fourth, verify the candidate VPS provider exposes the CPU model and disk type honestly, ideally by spinning up an hourly instance and running quick benchmarks (fio for disk, sysbench for CPU, iperf3 for network) before committing to a monthly term.

Finally, plan for migration from the start. Even a well-chosen VPS may need to be replaced if the testnet evolves, the provider changes terms, or the project graduates to mainnet. Maintaining configuration in version control, taking regular snapshots of synced state, and documenting the exact provisioning steps transforms an unrecoverable disaster into a routine maintenance event. The operators who succeed across multiple testnet seasons are not the ones who pick the perfect server on the first try; they are the ones who pick a sufficient server, monitor it carefully, and migrate efficiently when the requirements change.